Why Matrix Fails in Military Operations: A Technical Deep Dive into Cryptographic Brittleness and Design Flaws

Published: March 5, 2026 | Reading time: 12-14 minutes

The adoption of Matrix and Element across military and government networks has been celebrated as a major victory for end-to-end encryption and digital sovereignty. Yet a closer examination of how these systems perform under active conflict conditions reveals a fundamental mismatch between civilian design principles and the operational reality of modern warfare. This isn’t a critique of Matrix as a civil communications platform. Rather, it’s an examination of what happens when a system designed for privacy-conscious civilians encounters threats for which it was never architected.

Recent cryptographic analysis, particularly Soatok’s examination of vulnerabilities in vodozemac, has exposed fault lines that should concern anyone deploying Matrix in high-stakes security operations. The issues extend beyond cryptography into operational security, administrative privilege models, and the role of human error in systems designed with too many moving parts.

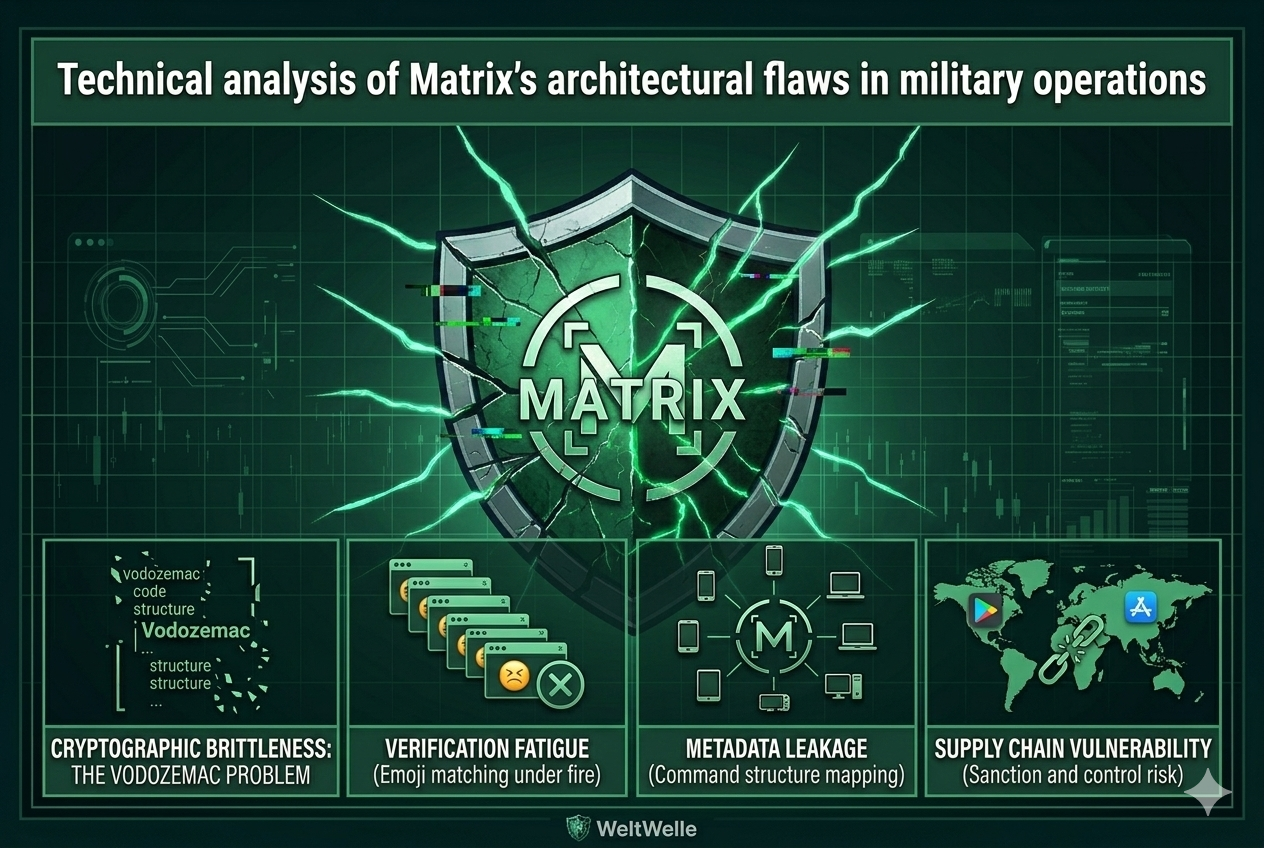

Cryptographic Brittleness: The Vodozemac Problem

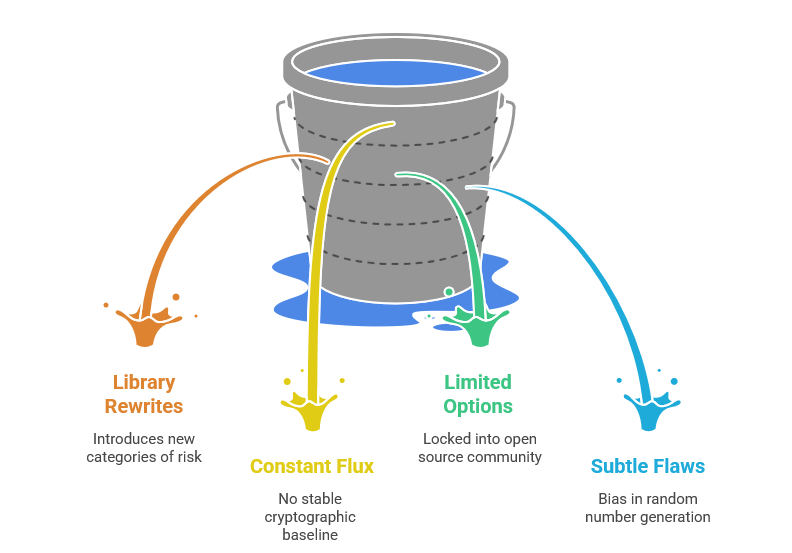

The migration from libolm to vodozemac, the Rust implementation of Matrix’s Olm and Megolm cryptographic protocols, was presented as a security improvement. In reality, it introduced a new category of risk. The library is a ground-up rewrite and now carries a growing set of documented issues that, according to the published analysis, could allow attackers to mount key extraction attacks and session integrity violations in ways that were not previously possible.

The core issue isn’t that vodozemac is poorly written. Rather, it’s that Matrix’s approach to cryptographic updates creates what we might call a “constant flux” problem. With hundreds of contributors across dozens of organizations and jurisdictions modifying the codebase continuously, there is no stable cryptographic baseline. A military unit cannot simply deploy Matrix, validate its security properties, and maintain that validation indefinitely. Instead, every patch, every update from the open source community brings the possibility of new attack vectors.
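The idea of a validated baseline can be pictured as a pinned digest: a unit records the hash of the exact build it reviewed and refuses anything else. This is a minimal sketch under stated assumptions. The byte strings stand in for real release artifacts, and the function names are hypothetical rather than part of any Matrix tooling.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def matches_validated_baseline(artifact: bytes, pinned_digest: str) -> bool:
    """True only if the artifact is byte-identical to the validated build."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_hex(artifact), pinned_digest.lower())


# Hypothetical stand-ins for a reviewed release and a later upstream update.
validated_release = b"library build that was reviewed"
pinned = sha256_hex(validated_release)

print(matches_validated_baseline(validated_release, pinned))
print(matches_validated_baseline(b"library build after an upstream patch", pinned))
```

A pin only detects that something changed. It says nothing about whether the change is safe, so every upstream update still forces a fresh review before the pin can be moved.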

This becomes more than a technical concern when you understand the operational context. A military unit cannot quickly rotate to a different cryptographic library if an issue emerges. They cannot fork the project and maintain their own secure version without the resources of a dedicated cryptographic team. They are locked into accepting whatever the open source community produces, including contributions from actors in adversarial nations or those influenced by state sponsors.

Consider this scenario: A single commit from a contributor claiming to fix a performance issue is merged into vodozemac. The change introduces a subtle bias in random number generation. This bias goes undetected in testing because it only manifests under specific operational conditions that civilian testers never encounter. Meanwhile, an intelligence agency with advanced cryptanalysis capabilities discovers the flaw and quietly exploits it. By the time the military discovers the problem, years of communications have been compromised.

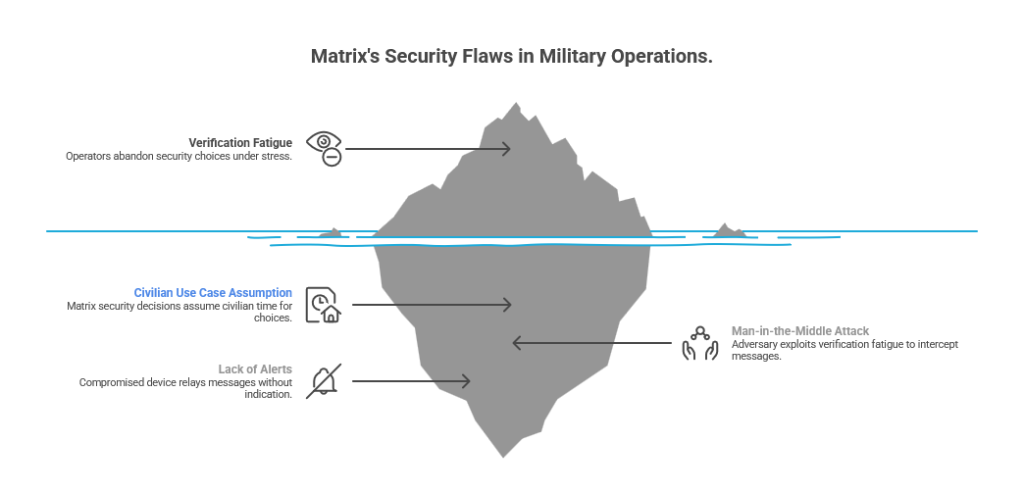

Verification Fatigue as an Attack Surface

In Matrix, the cross-signing and emoji verification process is presented as essential to security. In military practice, it becomes a liability. When a new device joins a secure channel with 500 participants, the system prompts for verification. This means comparing a sequence of emoji across devices to confirm the connection is legitimate.

In theory, this is good security practice. In the reality of field operations, it’s a disaster waiting to happen. An officer directing a tactical operation under fire is not going to carefully compare all seven emoji for a new participant. They will either skip the verification entirely or perform a cursory check that would satisfy a casual observer but represents no actual security validation.

This creates what is commonly called “verification fatigue.” The system offers many security choices, most of which the operator abandons under stress. An adversary who understands this behavior can exploit it systematically. By positioning a man-in-the-middle attack at a network chokepoint, an adversary can present spoofed verification prompts that appear legitimate enough for a stressed operator to accept.
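The cost of a cursory check can be put in numbers. Matrix emoji verification shows seven emoji, each drawn from a table of 64, which gives 42 bits in total. The sketch below assumes that an attacker’s substituted key produces an effectively random emoji sequence and that the operator compares only the first few emoji. The function name is hypothetical.

```python
from fractions import Fraction

EMOJI_TABLE_SIZE = 64   # 6 bits per emoji in Matrix SAS verification
EMOJI_SHOWN = 7         # emoji displayed per verification


def undetected_mitm_probability(emoji_checked: int) -> Fraction:
    """Chance a random mismatching sequence agrees on the emoji actually compared."""
    if not 0 <= emoji_checked <= EMOJI_SHOWN:
        raise ValueError("emoji_checked must be between 0 and 7")
    return Fraction(1, EMOJI_TABLE_SIZE ** emoji_checked)


for checked in (7, 3, 1, 0):
    p = undetected_mitm_probability(checked)
    print(f"{checked} emoji compared: 1 in {p.denominator:,}")
```

Under these assumptions, each emoji the operator skips makes an undetected interception 64 times more likely, and skipping the comparison entirely removes the protection altogether.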

The attack unfolds quietly. The compromised device now relays all future messages to the attacker. But there are no alerts, no suspicious logs, no indication that anything has gone wrong. The officer continues to believe they are communicating securely with their unit while every word is being intercepted.

The fundamental problem is that Matrix made security decisions that assume civilian use cases where operators have time to make careful choices. Military operations do not provide this luxury.

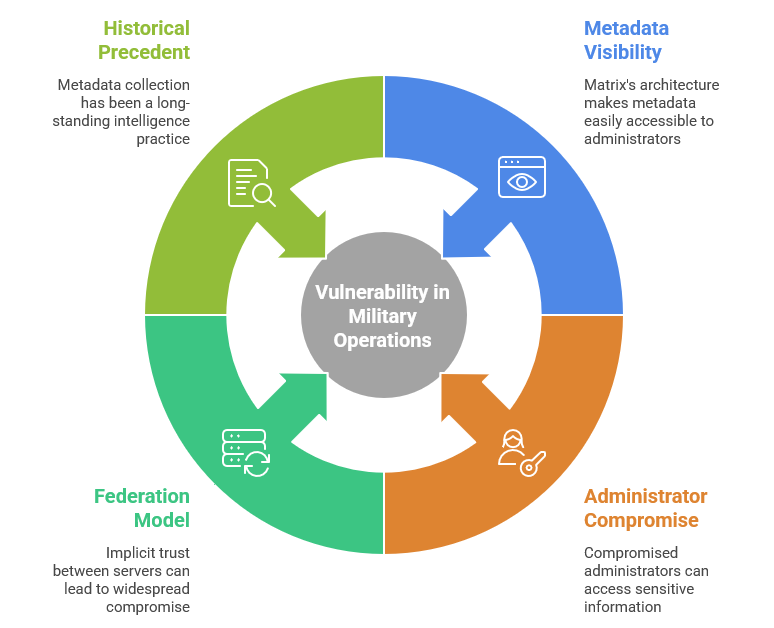

Metadata Leakage: The Intelligence Goldmine

Most discussions about encrypted communications focus on message content. In military operations, metadata is often more valuable than the messages themselves. Matrix’s architecture makes metadata extraordinarily visible to anyone with administrative access to the server.

An administrator of a Matrix server can observe in real time who is communicating with whom, how frequently these communications occur, what time of day the communication is heaviest, and what device types are being used. All of this is visible even if every single message is encrypted with perfect forward secrecy.

Now consider what an intelligence operation can do with this information. If the adversary manages to compromise a Matrix server administrator through recruitment or exploitation, they gain access to a comprehensive map of your command structure without ever needing to decrypt a single message.


The intelligence value of metadata was established long before encrypted communications became widespread. The NSA’s metadata collection programs targeted communications metadata because it reveals operational patterns that are often more useful than content. A signal analyst observing that Battalion A communicates with Battalion B every evening at 22:00 can infer operational planning, can estimate shift rotations, can even predict when offensive operations are likely to commence.
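This kind of inference needs very little data. The sketch below works on hypothetical records that contain only a sender, a room, and a timestamp, with no message content at all. The record layout and the identifiers are assumptions for illustration, not the schema of any real homeserver.

```python
from collections import Counter
from datetime import datetime, timezone
from itertools import combinations


def ts(day: int, hour: int, minute: int = 0) -> datetime:
    """Build a UTC timestamp in January 2026 for the sample data."""
    return datetime(2026, 1, day, hour, minute, tzinfo=timezone.utc)


# Hypothetical metadata rows: (sender, room, time sent). No content is present.
events = [
    ("@bn_a_ops", "!planning", ts(1, 22, 1)),
    ("@bn_b_ops", "!planning", ts(1, 22, 3)),
    ("@bn_a_ops", "!planning", ts(2, 22, 0)),
    ("@bn_b_ops", "!planning", ts(2, 22, 5)),
    ("@bn_a_ops", "!logistics", ts(2, 9, 30)),
    ("@supply", "!logistics", ts(2, 9, 31)),
]


def activity_by_hour(rows):
    """Count events per (room, hour of day) to expose recurring schedules."""
    return Counter((room, when.hour) for _, room, when in rows)


def co_participants(rows):
    """Count how many rooms each pair of senders has in common."""
    members = {}
    for sender, room, _ in rows:
        members.setdefault(room, set()).add(sender)
    pairs = Counter()
    for senders in members.values():
        pairs.update(combinations(sorted(senders), 2))
    return pairs


print(activity_by_hour(events).most_common(2))
print(co_participants(events).most_common())
```

Two counters are enough to surface a recurring 22:00 exchange and to show who shares rooms with whom, which is the outline of a command structure.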

Furthermore, an administrator can observe which geographic locations devices are connecting from, what times people log in and out, and how many hours they spend in a channel. An intelligence analyst with access to this metadata can estimate stress levels, predict staff changes, and identify key personnel simply by observing who maintains presence in which channels.

Matrix’s federation model compounds this problem. The system creates implicit trust relationships between servers. If one federated server is compromised, it can potentially gather metadata about activity on other servers. A carefully placed compromise in the federation can act like a tap on multiple channels simultaneously.

The Anti-Insider Architecture Problem

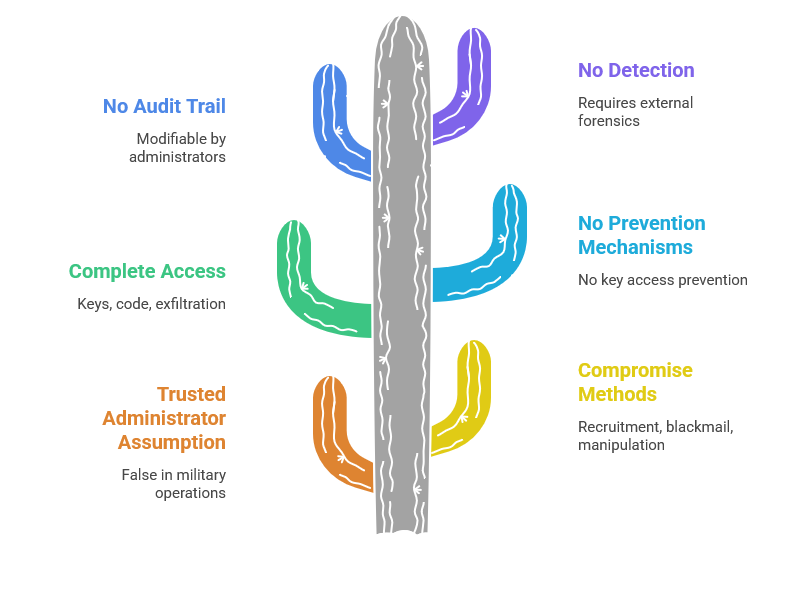

Matrix makes no serious attempt to defend against compromise of its administrative staff. This is a reasonable design decision for civilian systems where administrators are assumed to be trusted. In military operations, this assumption is false.

An intelligence service can compromise an administrator through various means: recruitment, blackmail, psychological manipulation, or exploitation of personal vulnerabilities. Once compromised, that administrator controls the homeserver and everything stored on it, including the server’s signing keys and any server-side key backups, and can modify code to introduce backdoors, exfiltrate communications, and poison the system’s integrity guarantees.

The architecture provides no mechanism to prevent this. There is no design principle that prevents an administrator from accessing keys. There is no audit trail that cannot be modified by someone with administrative privilege. There is no way to detect a compromised administrator short of external forensics.

Alternative approaches exist where the cryptographic integrity of the system does not depend on trusting humans. These systems use fundamentally different design choices where the architecture itself enforces security constraints that administrators cannot bypass.
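One general technique for the audit-trail gap is hash chaining, where each log entry commits to the one before it. The sketch below illustrates that technique only. It is not something Matrix or Synapse is known to provide, the names are hypothetical, and it assumes the latest chain hash is kept somewhere the administrator cannot write.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append(log: list, record: dict) -> None:
    """Append a record, chaining it to the current head of the log."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "hash": entry_hash(prev, record)})


def verify(log: list, trusted_head: str) -> bool:
    """Recompute the chain and compare its head with an externally held value."""
    prev = GENESIS
    for entry in log:
        if entry_hash(prev, entry["record"]) != entry["hash"]:
            return False
        prev = entry["hash"]
    return prev == trusted_head


log = []
append(log, {"actor": "admin1", "action": "read_user_list"})
append(log, {"actor": "admin1", "action": "export_room_state"})
head = log[-1]["hash"]  # assumed to be stored outside the administrator's reach

print(verify(log, head))                  # chain is intact
log[0]["record"]["action"] = "noop"       # an administrator edits history
print(verify(log, head))                  # the edit no longer verifies
```

The chain makes tampering detectable rather than impossible. An administrator who can also replace the trusted head can rewrite the whole log, which is why the head has to live outside their control.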

Human Factors in Security Design

Matrix requires significant expertise to deploy and operate correctly. The system has hundreds of configuration parameters. A single misconfiguration can create security failures that are difficult to detect. An administrator who doesn’t fully understand the federation protocol might enable insecure federation configurations. Someone who doesn’t understand the key rotation parameters might set them too aggressively or too conservatively, creating either availability problems or cryptographic risks.

This is not a criticism of the people operating Matrix. It’s a reflection of the system’s inherent complexity. When a system has 500 critical configuration choices, each with security implications, the probability that every deployment will correctly implement every choice approaches zero.

The math is straightforward. Assume 500 critical configuration parameters. Assume each has an independent 1 percent chance of misconfiguration. The probability that all 500 are configured correctly is 0.99 raised to the power of 500, which is approximately 0.0066. In other words, there is roughly a 99.3 percent probability that at least one critical misconfiguration will be present.

Matrix’s response to this problem has been to create more documentation, more training, more educational resources. But the fundamental issue remains: the system is too complex for reliable human operation. A military operation cannot afford to deploy systems that carry a roughly 99.3 percent probability of at least one critical misconfiguration due to human error.
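The misconfiguration estimate is easy to reproduce and to vary. The sketch below assumes that errors are independent and equally likely for every parameter, which is a simplification, and the function names are hypothetical.

```python
def p_at_least_one_misconfig(n_params: int, p_error: float) -> float:
    """P(at least one error) for n independent parameters with equal error rate."""
    return 1.0 - (1.0 - p_error) ** n_params


def max_error_rate(n_params: int, target_all_correct: float) -> float:
    """Per-parameter error rate at which all n are correct with the target probability."""
    return 1.0 - target_all_correct ** (1.0 / n_params)


for n in (50, 200, 500):
    print(n, round(p_at_least_one_misconfig(n, 0.01), 4))

# Error rate each of 500 parameters would need for a 95% chance of a clean deployment.
print(max_error_rate(500, 0.95))
```

Read in reverse, the second function shows how demanding the requirement is: for a 95 percent chance of a fully correct deployment across 500 parameters, the per-parameter error rate has to fall to about one in ten thousand.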

The Supply Chain Vulnerability

Element, the user-facing application for Matrix, depends on external infrastructure for proper operation. Updates are distributed through Google Play and the Apple App Store. The application relies on Google and Apple cryptographic services for verification. Authentication flows may depend on external identity providers.

This creates a supply chain vulnerability that has no parallel in truly sovereign systems. If Google or Apple decides to withdraw support for the application, it fails. If sanctions imposed on a jurisdiction restrict access to these services, the application becomes inoperable. If an intelligence agency persuades these companies to modify the update chain, the application becomes a vector for compromise.

This is not theoretical. Tech companies have restricted access to services for sanctioned jurisdictions. Companies have been compelled by court orders to modify their update mechanisms. The infrastructure that Matrix depends on is not under the control of the deploying nation and cannot be guaranteed to remain available.

Deployment Complexity and Operational Constraints

Deploying a new Matrix server requires weeks of work. It requires teams of specialists with deep understanding of the protocol, the Synapse server implementation, federation, certificate management, and countless other operational details. Once deployed, the server requires continuous monitoring and maintenance.

In tactical operations, this is untenable. A battalion operating in the field cannot wait weeks to deploy communication infrastructure. They need systems that can be stood up in hours using whatever hardware is available. They need systems that can be destroyed and redeployed as tactical circumstances change.

Matrix is not designed for this operational tempo. The supporting infrastructure requires institutional IT resources that are not always available in field conditions.

Architectural Differences

Alternative approaches make different architectural choices at every layer. The cryptographic core is fixed and stable, designed to military specifications rather than iteratively improved through open source contributions. Such systems assume some administrators are compromised and are designed to function correctly regardless. The deployment model uses minimal infrastructure and minimal interdependencies. The applications are engineered specifically for military operations and the degraded conditions they involve.

These differences are not cosmetic. They represent fundamentally different answers to the question of how to design secure communications for parties engaged in active conflict.

Conclusion

None of this is to say that Matrix is a bad system. It is excellent at what it was designed for: providing encrypted communications to privacy-conscious civilians using the internet. The problem is not with Matrix. The problem is with the expectation that a system designed for one context can simply be adopted into a completely different one.

Military communications in active conflict require a different set of priorities, different security assumptions, and different operational constraints. Pretending that a civilian communications system can be militarized through configuration alone is a category error that underestimates the fundamental differences between the two contexts.

The technical analysis detailed above is not speculation. These are properties of Matrix’s architecture that can be examined through code review, threat modeling, and operational analysis. The question is not whether these vulnerabilities exist. The question is what level of risk a military organization is willing to accept given their operational requirements.

For units that can afford Matrix’s complexity and its dependencies on external infrastructure, it may be adequate. For units operating in contested environments with limited resources and high stakes, the architectural mismatches become critical liabilities.

The conversation around military communications security should not be about whether to use Matrix or not. It should be about whether the system you deploy matches your operational environment. Understanding the technical differences is the only rational way to make that decision.

About this article: This technical analysis examines Matrix’s architectural properties in the context of military operations. For authoritative information on Matrix security, refer to official Matrix protocol documentation and third-party security research.